Pixel 6 Pro Selfie Camera Grainy

Google's Pixels have always taken incredible photos. Even if they once lagged behind in camera hardware, Google knew how to stretch every pixel to its limit. That's the science of computational photography, bringing us benefits like the Pixel 6's improved selfie portrait mode, which can even pick out individual strands of hair using the front-facing camera. According to Google, quite a lot of work went into it.

Portrait modes like these have understandably always been a little messy. They're trying to emulate the effect of a fast lens and a big sensor using limited hardware. But Google's had a few tricks over the years, from AI-powered "people pixel" detection to parsing "semantic" and "defocus" cues to augment phase-detection depth data. To further improve selfie portrait modes with the Pixel 6, Google had to train new models that worked in new ways, and it had the improved AI workload performance of Tensor to help.

If you can't find it, build it

AI models are only as good as the data used to train them. That normally means it's good to diversify your data sources as widely as possible, but that can also be an issue logistically. To start, Google needed a massive set of photos of people from all possible angles in all environments paired with perfectly accurate masking. The easiest way is to composite photos of perfectly masked-out people into existing backgrounds, but that introduces its own issues, as lighting for the individual and the scene would vary, among other problems. So Google generated its own data sets in the same sort of way that it implemented its portrait lighting effects on the Pixel 5.
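As a rough illustration of the "easiest way" described above, the sketch below blends a masked-out person over a background using a per-pixel alpha matte. It is not Google's code: the `composite` function, the tiny grayscale images, and the matte values are all hypothetical. It also shows the weakness the paragraph mentions, since the foreground keeps its original brightness regardless of how the scene is lit.

```python
from typing import List

# Hypothetical representation: a grayscale image as rows of floats in [0, 1].
Image = List[List[float]]


def composite(foreground: Image, background: Image, alpha: Image) -> Image:
    """Blend foreground over background per pixel using an alpha matte.

    alpha = 1.0 keeps the foreground pixel, alpha = 0.0 keeps the background,
    and values in between mix the two (e.g. wisps of hair).
    """
    return [
        [a * f + (1.0 - a) * b for f, b, a in zip(f_row, b_row, a_row)]
        for f_row, b_row, a_row in zip(foreground, background, alpha)
    ]


# Illustrative 2x2 example: a bright person over a dark scene.
person = [[0.9, 0.9], [0.9, 0.9]]
scene = [[0.1, 0.1], [0.1, 0.1]]
matte = [[1.0, 0.5], [0.0, 0.0]]  # top-left is person, top-right is a soft edge

result = composite(person, scene, matte)
```

Nothing in this blend adjusts the person to the scene's lighting, which is why a plain composite can look pasted-in and why the article describes Google relighting its captures instead.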

Remember this?

Google busted out the Light Stage geodesic camera sphere it keeps in storage, and thanks to the hundreds of LEDs, an array of cameras, and custom high-resolution depth sensors, researchers were able to use it to capture examples with immaculately precise masking, perfectly separating people from the background.

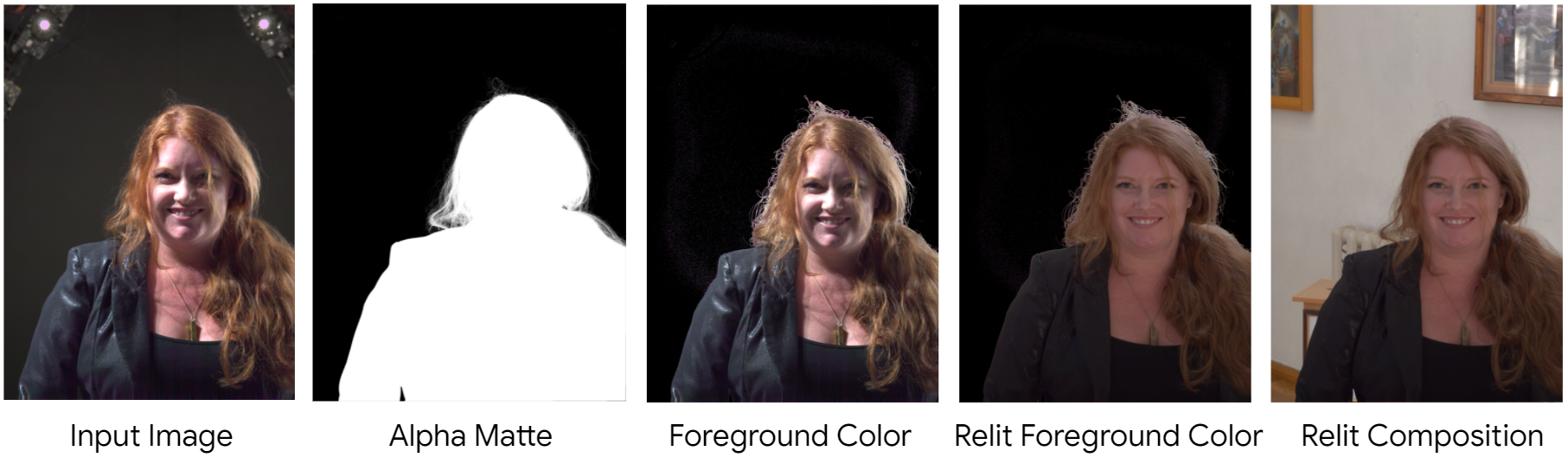

That might seem like it's enough to kick off the computational magic, but this is only the first stage. Google used these high-quality images to create separate sets of photos it would use to train the on-device model, taking the images it took of people under the sphere and compositing them with other backgrounds, relighting the portraits to match the scene with the help of all that extra depth data, ray-tracing, and even a simulation of the optical distortion effects of a virtual camera.

All that extra work means the model is even more prepared, not just to recognize the foreground, but also a wide variety of backgrounds and lighting conditions. Google arguably put more effort into creating the data sets used to train the Pixel 6's portrait mode model than actually went into training it.

This was further seasoned with some "real" photos taken using Pixels in the wild, with a high-accuracy model extracting similar masking data and a visual inspection approving only the highest-quality examples. Ultimately, Google used both data sets to train the model on a wide variety of scenes, poses, and people.

High-res, low-res, high-res

That model is only part of how the new Pixel 6 portrait mode works, though, and Google has a few clever tricks up its sleeves to save time and resources.

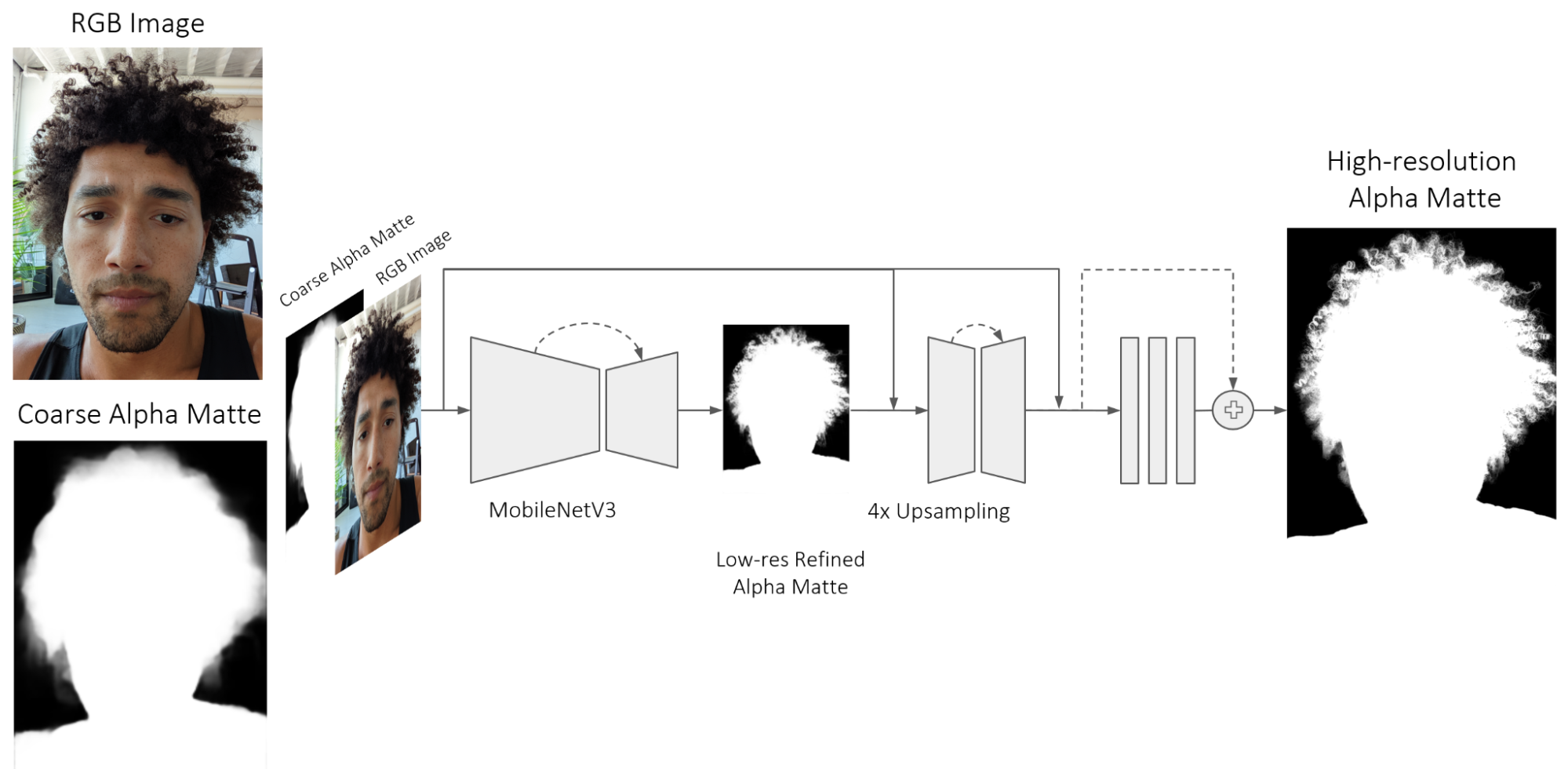

To start, your Pixel 6 takes that selfie and produces a coarse mask for the foreground, seemingly similar to the default approach most other portrait modes take. While many smartphone cameras stop there, Google further feeds both the coarse mask and the photograph itself into the model we just discussed, and the output of that model is a more highly defined but lower-resolution mask.

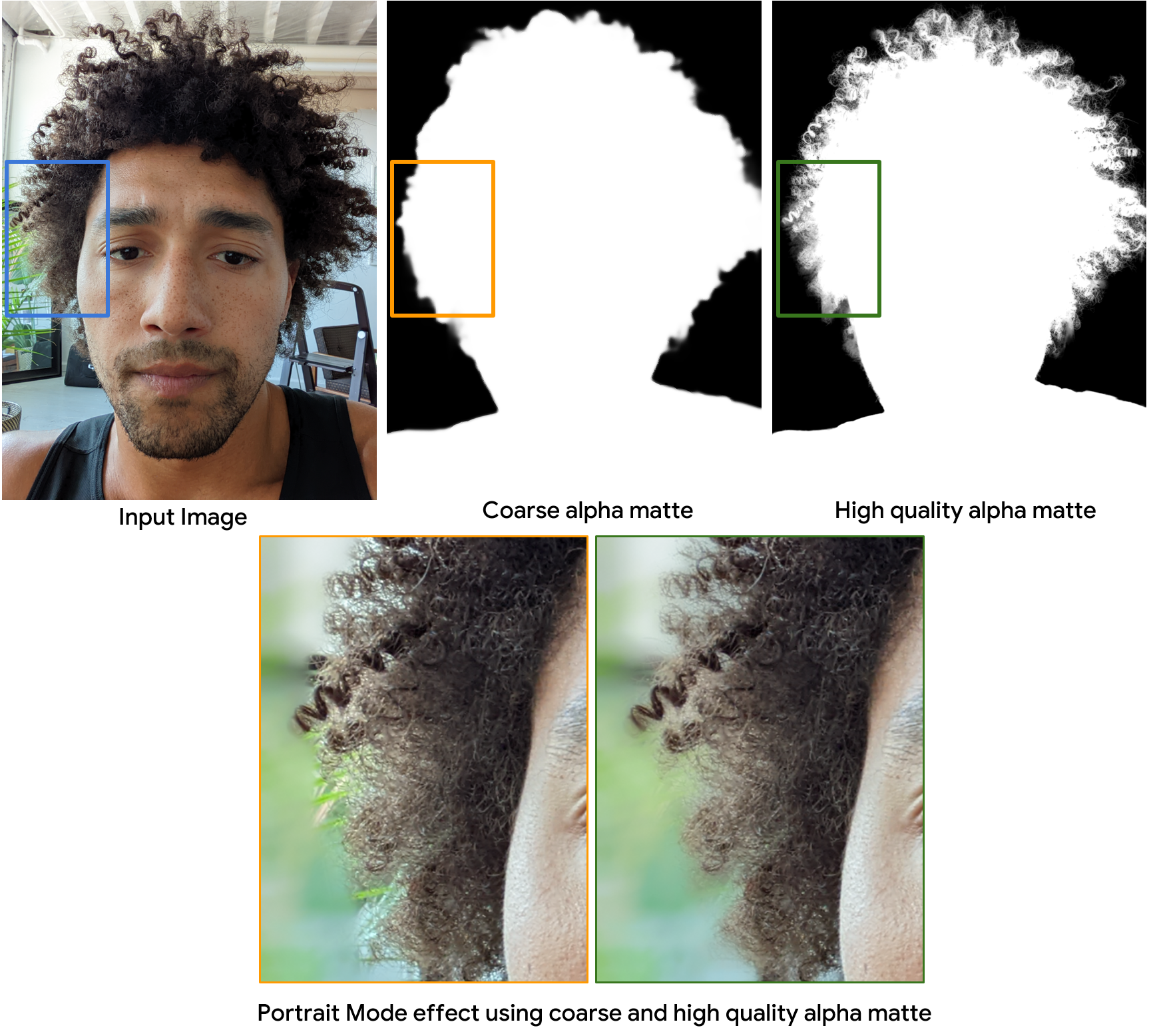

Models further refine the low-resolution refined mask into a high-resolution version, recursively referring back to both the original photograph and coarse mask. The steps sound sort of like the push-pull processing Google uses for Google Photos' denoising tool, but it works a little differently. In the end, a very fine and high-resolution mask is generated. And, using it, the portrait mode effect is selectively applied, preserving the foreground while the background is blurred. (Google even tries to estimate bokeh with it, though it's not perfect.)
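The final step, applying blur only where the mask says "background", can be sketched in a few lines. This is a simplified stand-in under stated assumptions, not Google's pipeline: the names `box_blur` and `apply_portrait_blur` are hypothetical, and a uniform box blur replaces whatever bokeh estimation the Pixel actually performs.

```python
from typing import List

# Hypothetical representation: a grayscale image as rows of floats in [0, 1].
Image = List[List[float]]


def box_blur(image: Image, radius: int = 1) -> Image:
    """Average each pixel with its neighbours; the window shrinks at borders."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            ys = range(max(0, y - radius), min(height, y + radius + 1))
            xs = range(max(0, x - radius), min(width, x + radius + 1))
            window = [image[j][i] for j in ys for i in xs]
            row.append(sum(window) / len(window))
        blurred.append(row)
    return blurred


def apply_portrait_blur(image: Image, mask: Image, radius: int = 1) -> Image:
    """Keep pixels where mask is 1.0, use the blurred image where it is 0.0."""
    blurred = box_blur(image, radius)
    return [
        [m * p + (1.0 - m) * b for p, b, m in zip(p_row, b_row, m_row)]
        for p_row, b_row, m_row in zip(image, blurred, mask)
    ]


# Illustrative 3x3 example: one bright "subject" pixel in the centre.
photo = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
mask = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]

portrait = apply_portrait_blur(photo, mask)
```

Because the blend is weighted by the mask, fractional mask values along fine detail such as hair mix sharp and blurred pixels instead of cutting a hard edge, which is one reason a sharper, more detailed mask pays off.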

Better portrait mode selfies on Pixel 6

Ultimately, this new model and pipeline have some big advantages. For one, the mask it can create is much sharper and more detailed, which means better and more accurate blurring around areas of intricate texture, like hair. Even semi-transparent details visible through a fringe of frizzy hair are less confusing for this new model. It's not just sharper, it's smoother.

The wider models this new portrait mode is trained on also make it better suited for a wider variety of skin tones and hairstyles, further affirming Google's Real Tone commitment to inclusion and equity. And, ultimately, it just means better and more accurate blurry bits in portrait mode selfies for all of us. It's still not perfect (even some examples Google provided show a few problem areas, and the gradient of the blur isn't as tack-sharp as a real DSLR with a fast lens would be), but it's a lot closer to the ideal.

At one time, the camera attached to your smartphone was an afterthought: a useful convenience and probably not something you'd make art with. But, as many photographers say, "the best camera is the one you have with you," and our phones are always with us, causing us to demand more and more from them. It's only thanks to the science of computational photography that smartphones are able to take such stellar photos with the tiny sensors they've got, and Google is on the bleeding edge of these developments, something every Pixel 6 owner can appreciate.

Source: https://www.androidpolice.com/pixel-6-selfie-portrait-mode/
